Improved Image Retrieval Using Visual Sorting and Semi-Automatic Semantic Categorization of Images

Authors: Kai Uwe Barthel, Sebastian Richter, Anuj Goyal and Andreas Follmann

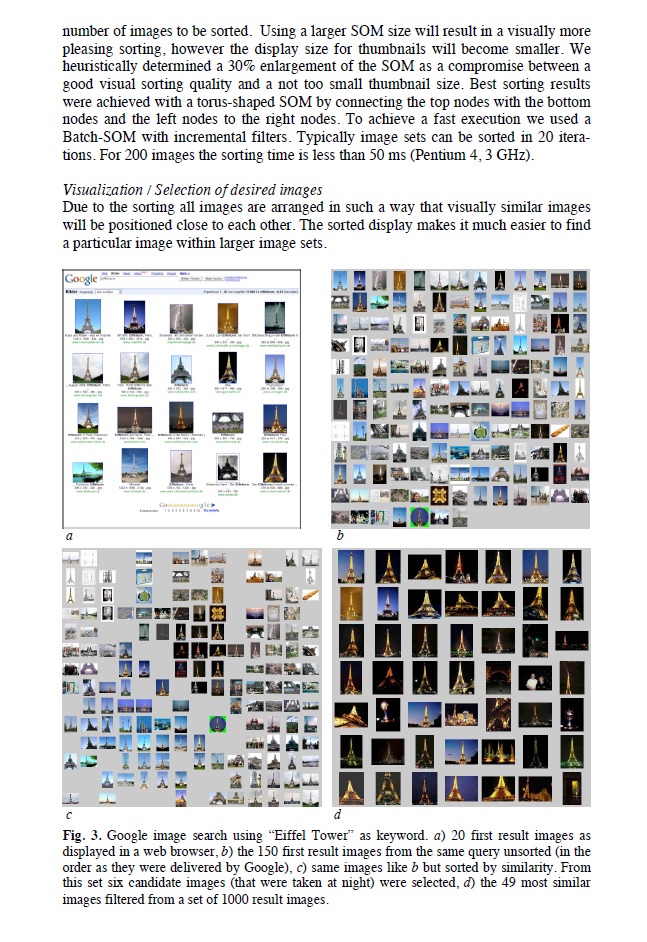

Abstract: The increasing use of digital images has led to the growing problem of how to organize these images efficiently for search and retrieval. What we see in images is hard to characterize, and it is even harder to teach this interpretation to a machine so that automated organization becomes possible. As a result, neither keyword-based Internet image search systems nor content-based image retrieval systems are capable of searching images according to their high-level semantics as humans perceive them. In this paper we propose a new image search system using keyword annotations, low-level visual metadata and semantic inter-image relationships. The semantic relationships are learned exclusively from the human users’ interaction with the image search system. Our system can be used to search huge (web-based) image sets more efficiently. However, the most important advantage of the new system is that it can be used to semi-automatically generate semantic relationships between the images.

Reference: www.scitepress.org/PublicationsDetail.aspx?ID=FhuQ+gAMKPc=